How to Measure the ROI of AI Adoption

Seventy-five percent of business leaders believe their competitive advantage will depend on AI. Companies of every size and sector are moving to integrate AI into their operations, and the pressure to act is coming from boards, investors, and competitors simultaneously.

But turning an AI initiative into a real competitive edge takes more than a promising use case and a motivated team. It requires knowing whether the investment is producing measurable AI business value and being able to defend that number in front of a board or an investor committee.

This article walks through the AI ROI metrics that reflect real business impact, how to set them before you build, and what a practical measurement framework looks like when the goal is not just to adopt AI but to profit from it.

Why Most Teams Struggle to Calculate AI Business Value

Companies rarely struggle to find a use case for AI. The difficulty starts when the initiative needs to justify its own budget. A working pilot and a profitable AI deployment are two very different things, and the distance between them is almost always a measurement problem, not an engineering one.

Baseline Before Build

The most common mistake is launching a pilot without documenting what the current process costs. How many hours does a task take today? What is the error rate? What does each error cost downstream? Without these numbers, any improvement stays anecdotal and falls apart in a board conversation.

When Anadea built an AI agent for a US law firm handling disability benefits cases, the baseline was specific: each case required roughly a week of manual review across hundreds of pages of medical documents. After deployment, that review took five minutes at 90% accuracy. The ROI conversation was straightforward because the starting point had been measured before anyone touched a model.

Culture, Governance, Workflow

IBM’s Q4 2025 Think Circle report concluded that culture, governance, workflow design and data strategy are the main constraints on realizing AI ROI. Not model architecture. Not compute. The same research found that 79% of executives see productivity gains from AI, but only 29% can express that productivity in financial terms.

This gap persists because most companies run AI out of the IT department. IT teams optimize for technical performance. Product teams optimize for business outcomes. When nobody owns the connection between AI performance metrics and revenue impact, the value gets created but never captured in a metric that matters to a CFO or an investor committee.

Measuring Too Early, Cutting Too Soon

Demanding a full return in the first weeks of a project is one extreme. Nvidia CEO Jensen Huang has argued the other, saying that demanding ROI from early AI work is like asking a child for a business plan on a hobby. That may hold for a research lab with unlimited runway. For a product team reporting to a board every quarter, it is not a realistic position. The goal is not to avoid measurement but to pick the right metrics for the right stage of maturity.

What Your Infrastructure Costs Your AI

One factor that rarely appears in AI business cases is the cost of the infrastructure AI has to operate within. When a model has to work around outdated APIs, fragmented data sources and manual handoffs between systems, every integration point adds friction. The model performs well in isolation. The system it lives inside erodes the returns. Teams that address technical debt and invest in proper AI automation infrastructure before deploying AI consistently see better ROI, sometimes by a significant margin, simply because the AI spends less time fighting the architecture.

What Is AI Adoption ROI and Why It Is Hard to Measure

AI adoption ROI measures the financial and operational return a company gets from its AI investment relative to the total cost of building, deploying and maintaining that system. The formula is familiar to anyone who has evaluated a technology investment before. But applying it to AI is harder than it appears.
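For reference, the familiar formula can be written out in a few lines. This is a minimal sketch that assumes the common net-gain-over-cost definition of ROI; the function name `roi` and the dollar figures are hypothetical and not taken from any specific project.

```python
def roi(total_return: float, total_cost: float) -> float:
    """ROI as a fraction: net gain divided by total cost."""
    if total_cost <= 0:
        raise ValueError("total_cost must be positive")
    return (total_return - total_cost) / total_cost


# Hypothetical figures: $300K of measured benefit against $200K of
# total cost (build + deploy + maintain) over the same period.
example = roi(total_return=300_000, total_cost=200_000)
print(f"ROI: {example:.0%}")  # ROI: 50%
```

The arithmetic is trivial. The hard part, as the rest of this section explains, is deciding what belongs in each of the two arguments.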

Traditional IT projects have a defined scope, a fixed timeline and predictable outcomes. AI projects rarely work this way. A model that automates document review saves analyst hours immediately, but it also improves decision quality, frees capacity for higher-value work and changes how an entire team operates over the following quarters. Some of these effects are easy to quantify. Others take months to become visible and even longer to attribute to a specific investment.

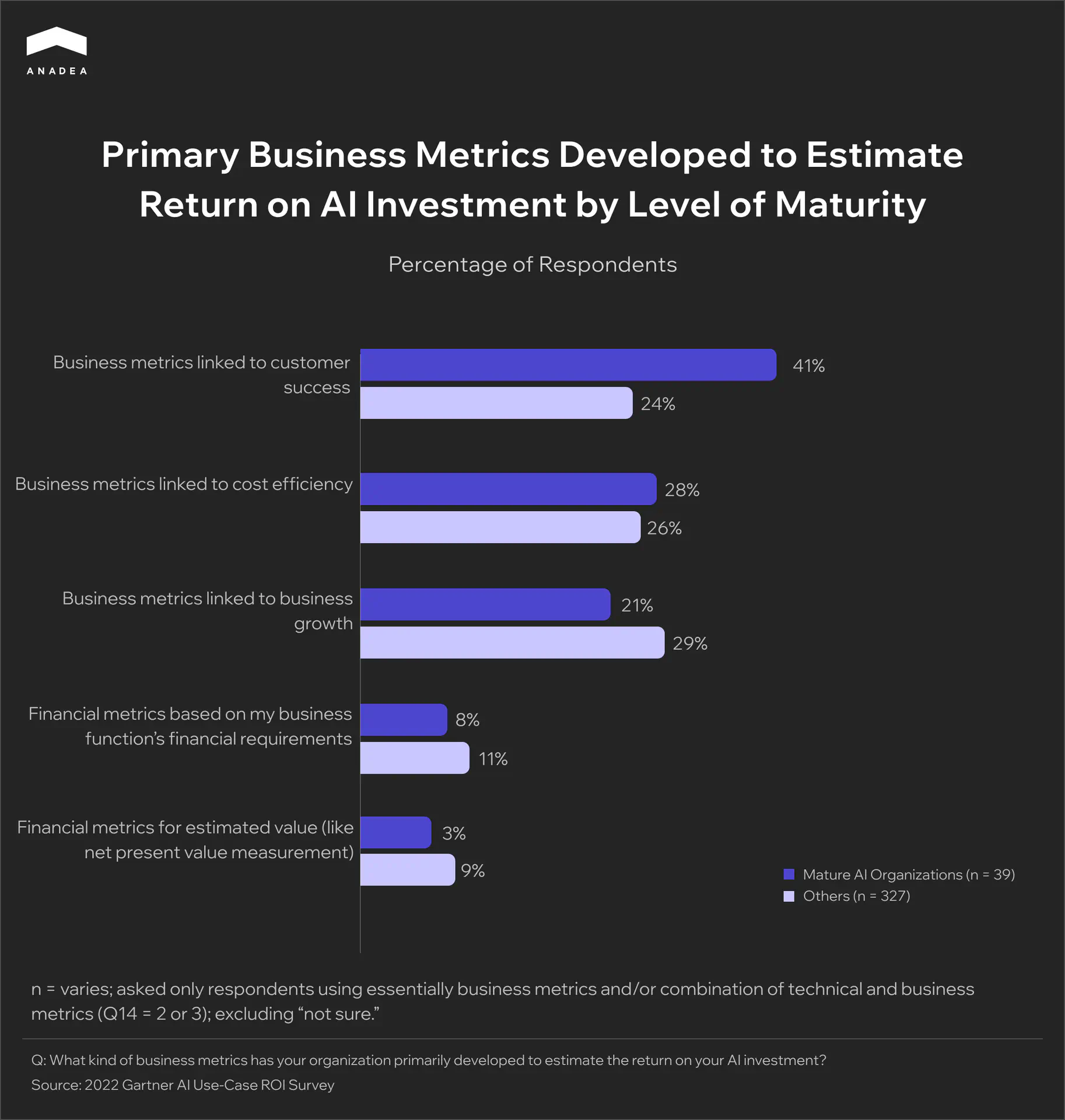

This is why companies at different levels of AI maturity measure ROI differently. A Gartner AI Use-Case ROI Survey found that 41% of mature AI organizations track returns through customer success metrics, while less experienced companies spread their measurement across cost efficiency, financial models and growth indicators without a clear focus.

The implication is practical. Companies that have been through several AI deployments stop measuring purely in terms of cost savings and start measuring in terms of business outcomes. Getting the measurement approach right from the start saves months of internal debate later, when the project needs to justify its next round of funding.

Key AI Performance Metrics That Reflect Business Value

Much of what AI produces is indirect and takes time to materialize. The most reliable AI performance metrics are the ones that connect a model’s output to a business outcome a CFO can track on a quarterly basis. If a company uses AI to speed up data analysis so that leadership can make better decisions, the financial result of those decisions may not show up for months. And short-term gains can be misleading. A company that announces AI-driven workforce cuts may see a stock price bump, but that says nothing about how customers and employees will respond over the next two years.

This is why financial analysts split AI ROI into two categories: hard and soft.

Hard ROI

Hard ROI covers tangible effects directly tied to profitability. These are concrete financial outcomes that show up on a P&L statement, can be reported to a board or an investor committee, and tracked quarter over quarter. Hard ROI answers the question that every CFO eventually asks: how much money did this save us, or how much new revenue did it generate? The calculation is usually straightforward once the right data is in place, and this is the category that determines whether an AI project gets continued funding or gets cut. Hard metrics fall into two groups: cost savings and revenue gains.

Primary metrics:

- Labor cost reduction. Hours saved through automation multiplied by hourly cost.

- Operational efficiency. Fewer manual handoffs, less rework, reduced downtime through AI-optimized operations.

- Error and rework reduction. The financial cost of wrong predictions or missed flags, measured before and after deployment.

- Infrastructure optimization. AI-driven resource allocation that reduces cloud spend, energy consumption and storage costs.

- Lead generation and conversion rates. Pipeline growth driven by personalization, better engagement and AI-powered recommendations.

- New revenue streams. AI-enabled products or services that were not viable before the deployment.

- Faster time to market. Shorter development and testing cycles that close the gap between investment and first revenue.

Soft ROI

Soft ROI includes benefits that do not appear as a line item on the income statement but affect the long-term competitiveness and health of the organization. These outcomes are real and executives experience them daily, but they resist simple quantification. They are typically measured through surveys, trend analysis and qualitative assessments over longer periods. Soft ROI rarely justifies an AI investment on its own, but hard ROI alone understates what a project is worth, and the soft side often determines whether the organization captures that value in full. Ignoring soft ROI means undervaluing projects that strengthen the company’s ability to compete, retain talent and make better decisions over time.

Primary metrics:

- Employee satisfaction and retention. Higher engagement and lower turnover when AI removes repetitive, low-value tasks.

- Decision-making quality. More accurate and faster decisions driven by AI-powered analytics, with cumulative impact over quarters.

- Customer satisfaction and loyalty. Reduced churn and higher lifetime value through AI-driven personalization and support quality.

- Organizational agility. Faster response to market changes, regulatory shifts and competitive moves.

- Brand perception and talent attraction. Stronger employer brand and client trust in industries where AI maturity is becoming a selection criterion.

How to Measure AI ROI

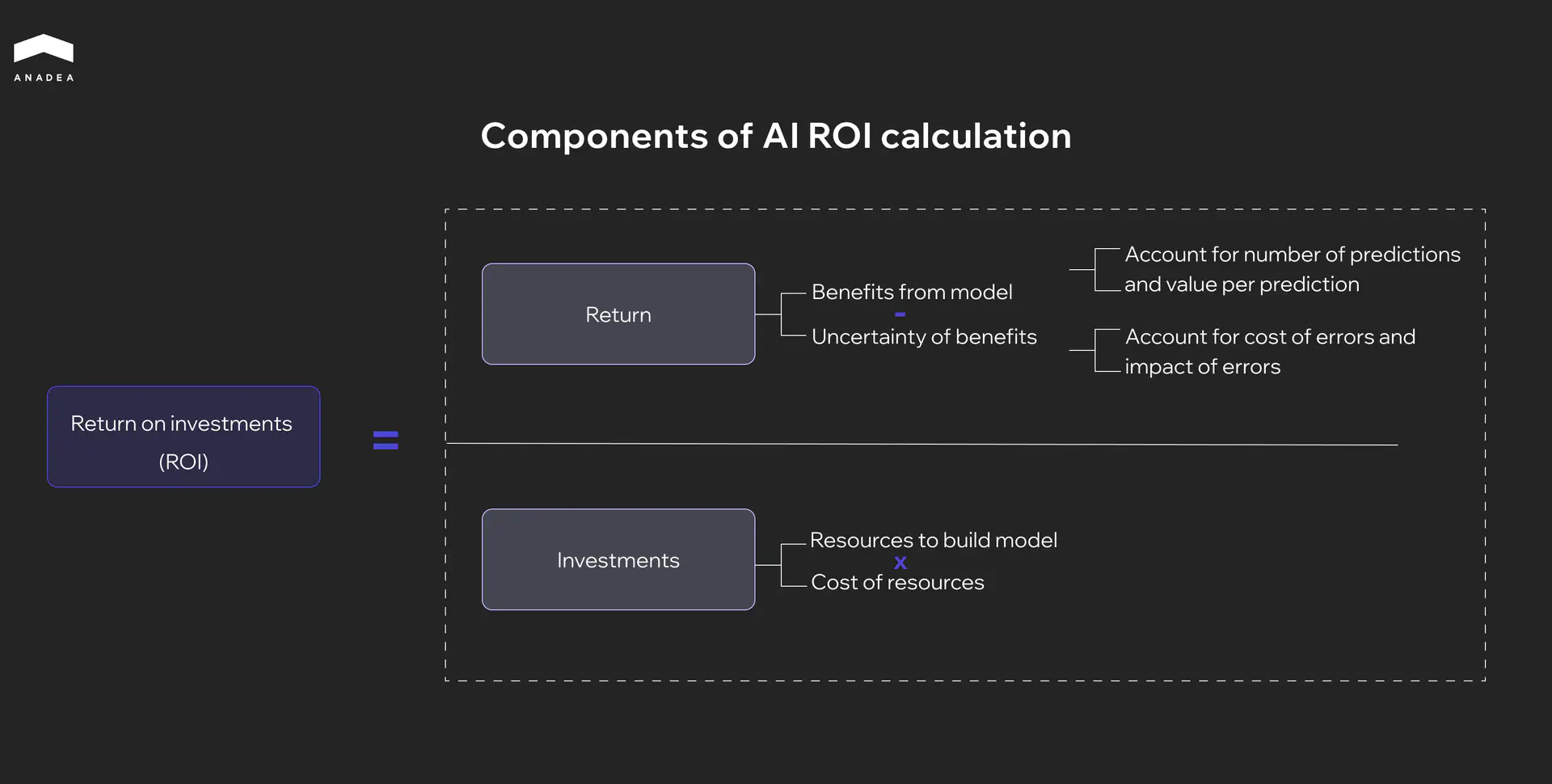

The PwC framework breaks AI ROI into the two components of any investment calculation: return divided by investment. But each side has variables specific to AI that most teams either miss or underestimate.

The Return Side

Return is the benefits a model generates minus the uncertainty of those benefits. In practice this means two things need to be tracked simultaneously.

First, the value the model produces. Every AI system generates outputs that have a business value attached to them. A scored lead, a classified document, a flagged transaction. The measurement here is the number of predictions the model makes multiplied by the average value each prediction creates. A fraud detection model processing 10,000 transactions daily and catching $50K in fraud weekly has a quantifiable return. A document review agent handling 200 cases per month where each manual review previously cost $400 in analyst time has one too.
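The two examples above can be worked through directly. This is a rough sketch: the helper name `gross_return` is hypothetical, and it assumes that the full $400 of analyst time counts as value per case and that the weekly fraud figure holds steady across a year.

```python
def gross_return(outputs_per_period: int, value_per_output: float) -> float:
    """Gross value produced in one period: volume times value per output."""
    return outputs_per_period * value_per_output


# Document review agent from the text: 200 cases per month, with each
# manual review previously costing $400 in analyst time. Treating the
# full $400 as value per case is an assumption (it ignores any
# residual human review).
monthly_review_value = gross_return(200, 400)
print(monthly_review_value)  # 80000

# Fraud model from the text: $50K caught per week. Annualizing over
# 52 weeks assumes the weekly figure stays constant.
annual_fraud_value = 50_000 * 52
print(annual_fraud_value)  # 2600000
```

Both figures are gross. Neither accounts yet for what the model gets wrong or for what it costs to run.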

Second, the cost of being wrong. No model is 100% accurate and every error has a price. A false negative in compliance means a missed violation and a potential fine. A false positive in lead scoring sends the sales team after unqualified prospects. The return side of the equation is only honest when it accounts for what the model gets wrong, not just what it gets right. Teams that report accuracy without attaching a dollar value to errors are overstating their return.
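One way to attach a dollar value to errors is to price false positives and false negatives separately and subtract both from the gross figure. The sketch below is illustrative only: the class `ErrorProfile`, the function `net_return`, and every number in it are hypothetical assumptions, not measured results.

```python
from dataclasses import dataclass


@dataclass
class ErrorProfile:
    """Error counts for one period and the assumed cost of each error type."""
    false_positives: int
    cost_per_false_positive: float
    false_negatives: int
    cost_per_false_negative: float

    def total_cost(self) -> float:
        return (self.false_positives * self.cost_per_false_positive
                + self.false_negatives * self.cost_per_false_negative)


def net_return(gross_value: float, errors: ErrorProfile) -> float:
    """Gross value of the model's outputs minus the priced cost of its errors."""
    return gross_value - errors.total_cost()


# Hypothetical month: $80K gross value, 12 false positives at $150 of
# wasted effort each, 8 false negatives at $1,200 of missed risk each.
errors = ErrorProfile(false_positives=12, cost_per_false_positive=150,
                      false_negatives=8, cost_per_false_negative=1_200)
print(errors.total_cost())          # 11400
print(net_return(80_000, errors))   # 68600
```

Keeping the two error types separate matters because they rarely cost the same. In this made-up case the eight false negatives cost five times more than the twelve false positives.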

The Investment Side

Investment is the resources required to build the model multiplied by the cost of those resources. This sounds simple, but the undercount here is where most AI business cases break down.

The visible layer includes engineering hours, model training, data labeling, cloud compute and integration work. Most teams budget this part reasonably well at the planning stage.

The less visible layer is what inflates the real denominator: data preparation and cleanup, which Gartner estimates causes 85% of AI project failures when neglected; workflow redesign to accommodate new AI-assisted processes; change management and team training; and ongoing monitoring, maintenance and periodic retraining as data patterns shift.

A model that costs $200K to develop and $15K per month to run has a very different ROI profile at month 6 than at month 18. Including the full AI implementation cost in the denominator from day one is what separates a realistic business case from one that falls apart at the first quarterly review.
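To see how different those two profiles are, here is a short sketch using the $200K build cost and $15K monthly run cost from the paragraph above. The $40K monthly benefit is a purely hypothetical assumption added for illustration, as are the function names.

```python
def cumulative_cost(build_cost: float, monthly_run_cost: float, months: int) -> float:
    """Total spend to date: one-off build cost plus run cost for each month."""
    return build_cost + monthly_run_cost * months


def roi_at_month(build_cost: float, monthly_run_cost: float,
                 monthly_benefit: float, months: int) -> float:
    """ROI to date, as a fraction, assuming a flat monthly benefit."""
    cost = cumulative_cost(build_cost, monthly_run_cost, months)
    benefit = monthly_benefit * months
    return (benefit - cost) / cost


BUILD_COST = 200_000       # from the text
MONTHLY_RUN_COST = 15_000  # from the text
MONTHLY_BENEFIT = 40_000   # hypothetical assumption

for month in (6, 18):
    cost = cumulative_cost(BUILD_COST, MONTHLY_RUN_COST, month)
    result = roi_at_month(BUILD_COST, MONTHLY_RUN_COST, MONTHLY_BENEFIT, month)
    print(month, cost, f"{result:.0%}")
# 6 290000 -17%
# 18 470000 53%
```

Under these assumptions the same project looks like a loss at month 6 and a clear win at month 18, which is why the horizon has to be agreed before the numbers are read.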

Putting Both Sides Together

The practical step is to build both sides of this equation before the project starts, not after. Define what a single AI-generated output is worth. Estimate the error rate and its cost. Map every resource the project will consume over 12 to 18 months, not just the build phase. This gives a baseline ROI projection that can be tested against real numbers as the deployment matures, and a framework that finance teams can work with from the start.

A Step-by-Step Framework

Step 1. Document the Current Process Cost

Before building anything, measure what the process costs today in hours, dollars and error rates. This becomes the baseline that every future ROI calculation is compared against. Without it, any improvement is anecdotal.
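A baseline can be as simple as a small record of volume, time, cost and error figures. The sketch below is one possible shape for it; the class `ProcessBaseline`, its field names and the sample values are all hypothetical assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProcessBaseline:
    """Pre-AI cost of a process, captured before any build work starts."""
    tasks_per_month: int
    hours_per_task: float
    hourly_cost: float
    error_rate: float       # fraction of tasks that contain an error
    cost_per_error: float   # downstream cost of one error

    def labor_cost(self) -> float:
        return self.tasks_per_month * self.hours_per_task * self.hourly_cost

    def error_cost(self) -> float:
        return self.tasks_per_month * self.error_rate * self.cost_per_error

    def monthly_cost(self) -> float:
        return self.labor_cost() + self.error_cost()


# Hypothetical baseline: 200 tasks a month, 8 hours each at $50/hour,
# 5% of tasks contain an error that costs $500 to fix downstream.
baseline = ProcessBaseline(tasks_per_month=200, hours_per_task=8,
                           hourly_cost=50, error_rate=0.05, cost_per_error=500)
print(f"{baseline.labor_cost():,.0f}")    # 80,000
print(f"{baseline.error_cost():,.0f}")    # 5,000
print(f"{baseline.monthly_cost():,.0f}")  # 85,000
```

Freezing the record is a deliberate choice in this sketch: a baseline that can be edited after the pilot starts stops being a baseline.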

Step 2. Define What a Single AI Output Is Worth

A scored lead, a reviewed document, a flagged anomaly. Each has a business value. Multiply by expected volume to get projected return per period.

Step 3. Estimate the Error Rate and Price Each Error

Talk to the teams who will deal with wrong outputs. What does a false positive cost in wasted effort? What does a false negative cost in missed risk or lost revenue? Subtract this from the return projection.

Step 4. Map the Full Investment Over 12 to 18 Months

Not just the build phase. Include data preparation, integration, training, workflow changes, cloud compute, ongoing maintenance and retraining cycles. This is your real denominator.

Step 5. Set a 90-Day Pilot with Pre-Agreed KPIs

Pick metrics that can show movement within three months. Processing time reduction, automation rate, cost per output. These are leading indicators. They won’t prove full ROI yet, but they will tell you whether the project is heading in the right direction.
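Pre-agreed KPIs are easier to hold to when the targets are written down in a form that can be checked mechanically at day 90. The following is a minimal sketch; the `Kpi` class, the KPI names, the targets and the observed values are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Kpi:
    """A pilot KPI with a target agreed before the pilot starts."""
    name: str
    target: float
    higher_is_better: bool = True

    def met(self, observed: float) -> bool:
        if self.higher_is_better:
            return observed >= self.target
        return observed <= self.target


# Hypothetical targets for a 90-day pilot.
PILOT_KPIS = [
    Kpi("processing_time_reduction", 0.30),
    Kpi("automation_rate", 0.50),
    Kpi("cost_per_output_usd", 25.0, higher_is_better=False),
]


def review_pilot(observed: dict[str, float]) -> dict[str, bool]:
    """Map each pre-agreed KPI to whether its target was met."""
    return {kpi.name: kpi.met(observed[kpi.name]) for kpi in PILOT_KPIS}


# Hypothetical day-90 measurements.
day_90 = {
    "processing_time_reduction": 0.42,
    "automation_rate": 0.46,
    "cost_per_output_usd": 19.5,
}
print(review_pilot(day_90))
# {'processing_time_reduction': True, 'automation_rate': False, 'cost_per_output_usd': True}
```

A mixed result like this one is a signal about direction, not a verdict on ROI.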

Step 6. Track AI Adoption Success Metrics Separately

Leading indicators like adoption rate, processing speed and data quality scores are the AI adoption success metrics that show early signals. Lagging indicators like revenue impact, total cost savings and payback period take longer to materialize. Evaluating a project by lagging indicators in month two is the most common reason healthy AI initiatives get killed prematurely.
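Payback period illustrates why lagging indicators mislead when read too early. The sketch below assumes a flat monthly benefit and flat monthly run cost, which real deployments rarely have; the function name and all figures are hypothetical.

```python
from typing import Optional


def payback_month(build_cost: float, monthly_run_cost: float,
                  monthly_benefit: float, horizon_months: int = 36) -> Optional[int]:
    """First month in which cumulative benefit covers cumulative cost.

    Returns None if the project does not pay back within the horizon.
    """
    for month in range(1, horizon_months + 1):
        cost = build_cost + monthly_run_cost * month
        benefit = monthly_benefit * month
        if benefit >= cost:
            return month
    return None


# Hypothetical project: $200K build, $15K/month to run, $40K/month benefit.
print(payback_month(200_000, 15_000, 40_000))  # 8

# The same project judged at month two: $80K of benefit against $230K
# of cost. It looks like a failure, although it is on course to pay
# back in month eight under these assumptions.
print(40_000 * 2, 200_000 + 15_000 * 2)  # 80000 230000

# A project whose benefit never exceeds its run cost does not pay back.
print(payback_month(200_000, 15_000, 15_000))  # None
```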

Step 7. Review and Recalibrate Quarterly

AI performance is not static. Models drift, data patterns change, business conditions shift. A quarterly review cycle prevents two equally dangerous outcomes: cutting a project that needed more time, and continuing a project that nobody is willing to honestly evaluate.
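A quarterly review can start from something as plain as comparing the projected ROI for the quarter with the measured one and flagging large gaps. This is only a sketch: the function name, the status labels and the 20-point tolerance are hypothetical choices, not a recommended threshold.

```python
def quarterly_review(projected_roi: float, actual_roi: float,
                     tolerance: float = 0.20) -> str:
    """Classify the gap between projected and actual ROI (both as fractions)."""
    gap = actual_roi - projected_roi
    if gap < -tolerance:
        return "investigate"            # well below plan: check drift, data, adoption
    if gap > tolerance:
        return "recalibrate upward"     # well above plan: the projection is stale
    return "on track"


# Hypothetical quarters: (projected ROI, actual ROI).
quarters = {
    "Q1": (-0.40, -0.45),
    "Q2": (-0.10, -0.35),
    "Q3": (0.15, 0.45),
}
for name, (projected, actual) in quarters.items():
    print(name, quarterly_review(projected, actual))
# Q1 on track
# Q2 investigate
# Q3 recalibrate upward
```

A negative ROI in an early quarter is not a flag in itself here. Only a departure from the agreed projection is, which keeps the review from cutting a project that simply needed more time.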

Strategies for Improving AI ROI

Knowing how to measure AI ROI and knowing how to improve it are two different skills. The measurement framework tells you where you stand. The strategies below come from patterns we have seen across 60+ AI projects since 2019, and they align with what independent research consistently confirms.

Iterate Before You Scale

Teams that introduce AI in small stages and adjust based on real feedback consistently see better returns than those attempting full-scale rollouts from the start. The logic is practical. Small iterations expose problems early when they are cheap to fix. A full deployment exposes them late when the budget is already committed.

This is why a structured discovery phase matters before any build begins. Two weeks spent mapping the workflow, identifying data gaps and defining success metrics can prevent months of rework later. In our experience, the ROI of an AI project depends more on what happens in those first two weeks than on which model the team selects.

Get Every Stakeholder in the Room Early

Siloed AI projects consistently underperform. When a model is built by engineers, handed to operations and evaluated by finance with no shared context between them, each group optimizes for a different outcome. Engineering celebrates accuracy. Operations worries about adoption. Finance sees cost without clear return.

The projects where we have seen the strongest ROI had one thing in common. Product, engineering, operations and finance were aligned on what success meant before development started. Not as reviewers at the end but as participants in scoping the problem. We have seen this pattern play out especially clearly in AI strategies for private equity investments and portfolio operations, where financial discipline and operational speed have to coexist from day one.

Redesign the Workflow Before Building the Model

The most common path to zero ROI is building a technically impressive model that nobody uses. Adoption fails when the AI is dropped into an existing workflow without adjusting how people actually work.

McKinsey’s 2025 AI survey found that organizations with significant financial returns from AI were twice as likely to have redesigned workflows before selecting a modeling approach. The workflow change came first. The technology followed.

Spend More Time on Data Than on the Model

Rushing past data preparation to get to the model faster is the most reliable way to destroy AI ROI. This is not a new insight, but teams keep making this mistake because data work feels like a delay rather than progress. In every AI project we have delivered since 2019, data readiness determined the timeline more than any other factor. Companies that accept this early plan better and ship faster.

Conclusion

Measuring AI ROI comes down to discipline. Baseline the process before you build, budget for the full lifecycle, and give the project room to prove itself. These steps are what turn a promising pilot into numbers you can defend in front of a board. If you want a second pair of eyes on your AI business case before the budget is locked in, talk to our team. We have been doing this since 2019.

Have questions?

- Automation use cases that replace repetitive manual work usually show returns in three to six months. Projects that require workflow redesign or cross-system integration take twelve to twenty-four months. Applying the first timeline to the second type of project is the most common reason healthy initiatives get cut too early.

- Mature adopters report around $3.70 in value for every $1 invested, with top performers reaching $10. At the same time, 70 to 85 percent of AI projects miss their original ROI targets. The realistic expectation is meaningful returns within eighteen months if execution discipline is high.

- The most reliable setup is shared ownership between a product owner who understands the business outcome and a finance partner who can translate it into P&L terms. Executive sponsorship helps, but daily accountability belongs to the people closest to the workflow.

- Missing baselines. Teams launch pilots without documenting what the current process costs, so there is nothing to compare results against later. The second biggest reason is undercounting the investment side by leaving out data preparation, workflow redesign, and maintenance.
